AI-generated writing is on the rise. But is it a tool for everyone?

Ever since, well, robots were even an idea, there have been nightmares about the “robot uprising”: the fear that humanity was ensuring its own destruction, building the very things that would replace it. Whether it’s Skynet from The Terminator, HAL 9000 from 2001: A Space Odyssey, or the machines of The Matrix, the examples throughout fiction are countless. Well, somewhere along the way, we all found ourselves stuck in that very same sci-fi story. The robots are here.

And they’re ready to take over.

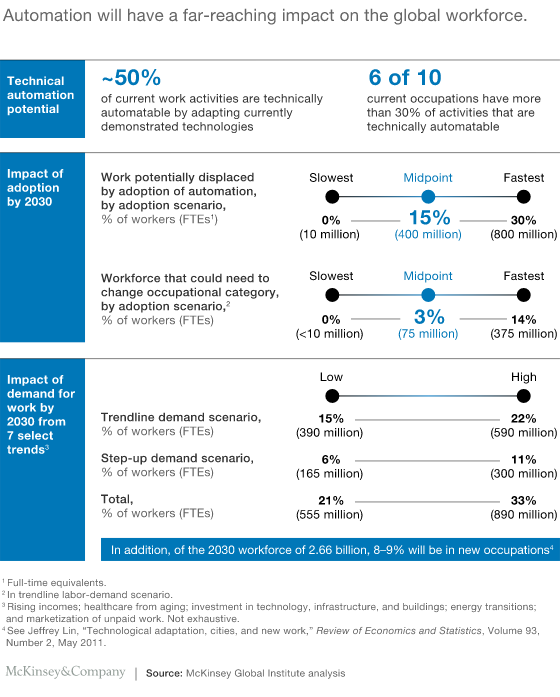

Automation is spreading through the workforce. Roughly 34,000 manufacturing robots were shipped to North America in 2016. In fact, according to the consultancy firm PwC, up to 38% of US jobs could be at high risk of automation by the early 2030s.

But there’s one field that robots might not be able to penetrate quite so easily. At least, if you know what you’re up against. And that field? Content creation.

So the question is, are robots coming for your job? And if they are, should you welcome them?

Artificial intelligence – the authors of the future?

There’s no denying that automation is hitting the workplace in a big way. With just the technology available today, around 50% of work activities could be automated, and 60% of jobs face at least some measure of automation. Those numbers will more than likely only increase, and the faster technology advances, the faster they will climb.


Writing isn’t exempt from this trend, either. In October 2015, Gartner, Inc., a leading technology research firm, predicted that by 2018 a full 20% of business content would be authored by machines. In other words, one in five pieces of business content would not be written by a human. Who knows how fast that number could rise?

After all, some experts now theorize that computers and AI software could be as smart as humans, if not smarter, as soon as 2029. The expert behind that prediction, Ray Kurzweil, isn’t just a Google engineer working on the next, smarter search engine, or the man who popularized the concept of the “Singularity.” He’s also the man who predicted that a computer would beat the world chess champion by 1998. Now, admittedly, he was a little off on that prediction.

Because it happened in 1997, when IBM’s Deep Blue defeated Garry Kasparov.

Computers get smarter every day. The Turing Test, long the benchmark for genuine artificial intelligence, stood relatively unchallenged for years. It asks a human judge to converse with an unknown party, who could be either a human or an AI, and then decide which it is. Some programs have been impressive, but all of them were sniffed out pretty thoroughly as AIs.


That was, however, until 2014, when the test was reportedly beaten. An AI presenting itself as a 13-year-old Ukrainian boy named Eugene Goostman convinced 33% of the judges that they were, in fact, speaking to a human. This beat the 30% threshold the event’s organizers used, albeit barely. It was also done without any agreed-upon topics for the judging, something that previous Turing Test “winners” have used to their advantage.

This isn’t just theory or speculation, either. We can look at one particular business juggernaut for proof: Forbes. A titan of business journalism, Forbes already generates many of its earnings reports with an AI writing program, Narrative Science’s Quill. When you read one of those earnings reports, you’re reading something written by a machine.

And they’re not the only ones.

Computers can write, but should they?

Of course, just because you can do something doesn’t always mean you should. And the levels of AI we are currently able to create and utilize, well, they’re far from perfect.

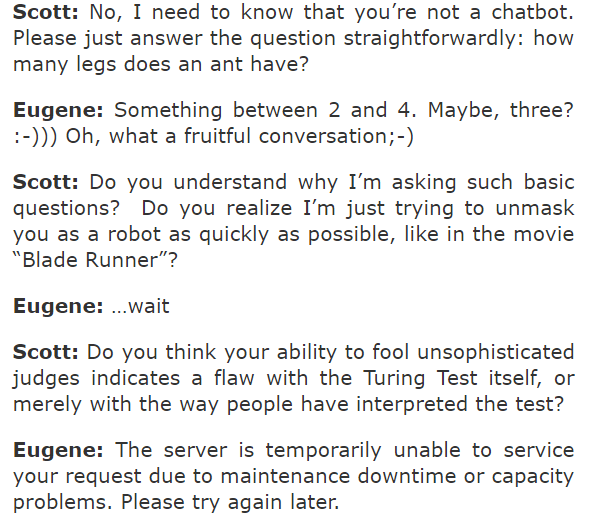

After all, let’s look at our friend Eugene. Beating the Turing Test sounds fantastic. But what’s the reality?

Scott Aaronson, a prominent theoretical computer scientist, actually had a conversation with Eugene, and the results were, well… let’s call them less than impressive.

And, as Aaronson himself points out, convincing people that your AI is human has a little less “wow” factor when you also have to tell them that it’s 13 and speaks English as a second language. That makes a convenient excuse for a program that is incapable of holding a real conversation. On top of that, it still convinced only 33% of the judges, which is hardly an astonishing feat.

Maybe AIs aren’t quite as convincing as we think they are yet. They can definitely generate text, and even sound kind of human. But there’s acting human, and then there’s the actual act of writing content. Are the two really that similar?

Maybe not. At this stage, computers seem to be much better at static, one-way writing than at holding a full conversation. And to anyone who’s spoken to a chatbot, as opposed to reading those Forbes reports, this isn’t really a surprise.

Then again, don’t get too comfortable. Chatbots are entering the workplace, too. Drift, a B2B startup focused on increasing communication and interaction, has started using its own chatbot, the aptly named Driftbot, as a sales assistant. It can hold simple conversations, answer quick questions, and work as a digital receptionist that routes a customer’s question to a trained associate.

And really, with so many chat associates online using a script or structured language, who knows when you’re speaking to a person or a machine?

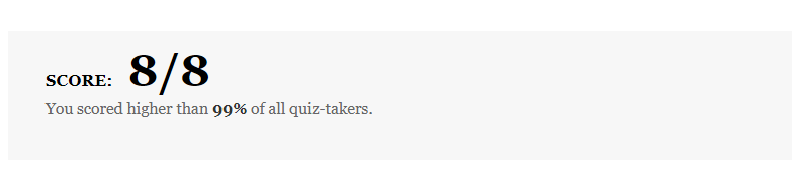

After all, the New York Times published a quiz that challenged readers to guess which writing samples were written by humans and which by AI. I’m not too proud to admit that I only got 5 out of 8 correct on my first attempt.

Especially since, according to the quiz, a perfect score beats 99% of all takers.

AIs are clearly getting smarter: smart enough to fool the bulk of us in the right circumstances, to the point that people have serious trouble telling human writing from machine writing. And companies are already using computer-generated writing to fill their needs.

So where does that put you, and your business needs?

Would automated writing work for you?

No, computer-generated writing isn’t perfect. It probably won’t be for some time, especially because, as with any program, it can only be as smart as the people that put it together can make it.

But is it bad? Something to avoid? Not at all. There are plenty of niches where AI provides a serious leg up. For one thing, it is improving search engine optimization: search engines increasingly use AI to understand things like context and relevance, rather than just keyword density. This means the results your customers pull up are a whole lot more meaningful to what they’re looking for, and not just a block of “important”-sounding phrases. AI is also improving mobile search by returning better results for mobile apps and for voice searches made on the go, which is becoming pretty important.

It can also speed up content creation, freeing you up for more involved pursuits. Take Feedly, for example, a handy service that gathers and organizes information for you. Plug in a few tags or searches for the articles you’re working on, and it will collect blogs and posts on that exact topic. This leaves you with more time to dive into the serious research, rather than sifting through endless, tangentially related posts.

Unsurprisingly, computer-generated writing has so far proven much better suited to certain kinds of writing: emails, statistical analysis, business reports. Anything with a large chunk of data and hard numbers that it can quickly and efficiently translate into readable text.

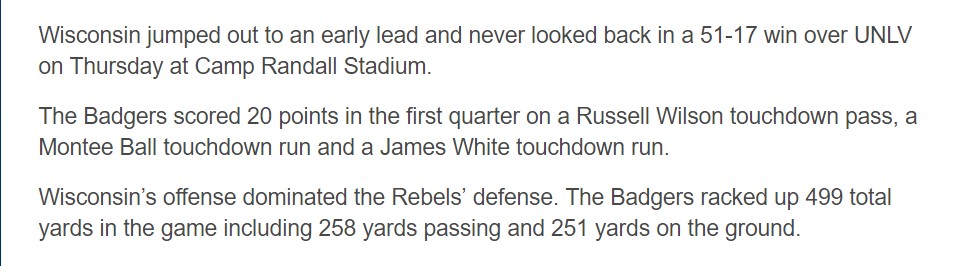

For example, the Los Angeles Times has used an automated program to write reports on California earthquakes, a simple task when you can plug in the location, magnitude, and other data points about a specific quake. The same goes for baseball reports: the Associated Press now automates many of its minor league baseball recaps.
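For the curious, here’s roughly what that kind of “plug in the data” writing can look like. This is a minimal Python sketch under our own assumptions, not how any of the organizations mentioned above actually built their systems: the `QuakeReading` fields, the wording thresholds in `describe_strength`, and the sample place name are all hypothetical. The idea is simply a fixed sentence template filled with numbers, plus a rule or two that picks the wording.

```python
from dataclasses import dataclass


@dataclass
class QuakeReading:
    # Hypothetical fields; a real seismic data feed would look different.
    magnitude: float
    place: str
    depth_km: float
    time_local: str


# A fixed sentence template: the "writing" is done once, by a human.
TEMPLATE = (
    "A magnitude {mag:.1f} earthquake struck {place} at {time}, "
    "at a depth of {depth:.1f} kilometers."
)


def describe_strength(magnitude: float) -> str:
    # Illustrative wording thresholds (an assumption, not an official scale).
    if magnitude < 3.0:
        return "The quake was minor and may not have been felt."
    if magnitude < 5.0:
        return "The quake was light to moderate."
    return "The quake was strong enough to cause damage nearby."


def write_report(q: QuakeReading) -> str:
    """Fill the template with the data, then append one rule-based sentence."""
    lead = TEMPLATE.format(
        mag=q.magnitude, place=q.place, time=q.time_local, depth=q.depth_km
    )
    return f"{lead} {describe_strength(q.magnitude)}"


if __name__ == "__main__":
    reading = QuakeReading(
        magnitude=4.2,
        place="8 km north of Example City",  # hypothetical location
        depth_km=10.0,
        time_local="6:25 a.m.",
    )
    print(write_report(reading))
```

Running it prints a two-sentence report for a made-up magnitude 4.2 quake. Everything the “writer” knows comes from the template and the rules a human wrote, which is exactly why this kind of writing tends to come out accurate but dry.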

Now, while many companies are diving right into computer-generated writing, is the money really worth it? After all, how does it stack up?

In a lot of cases, surprisingly well, actually.

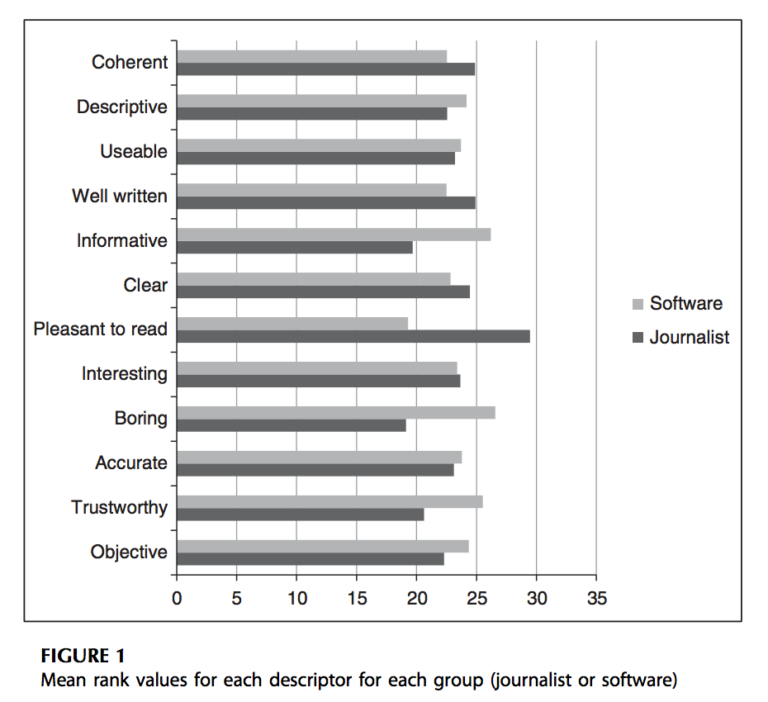

A study entitled “Enter the Robot Journalist,” by Christer Clerwall, had participants read a piece of content and judge whether it was written by a machine or a human. They were also asked to rate it on 12 separate qualities, shown in the graph above.

Software-written text came across as more “Boring” and less “Pleasant to read,” but also as more “Trustworthy” and “Accurate.”

Take this article, for example. Was it written by a human being? There’s an author listed, so you’d assume yes. But can you tell? And how?

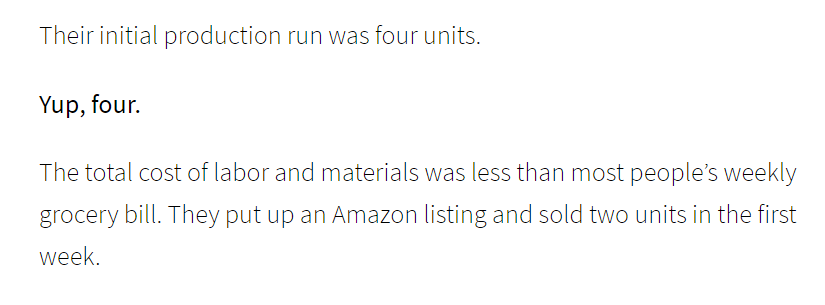

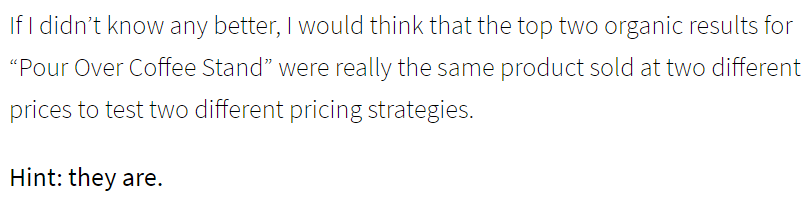

The simple answer is: voice. Human writers have a voice, a natural cadence, a character to them that computer programs are woefully unable to match. Like the following:

Does that sound like a computer? Of course not. The author hits you with some colloquial language (“Yup, four”) in a way that feels familiar. Friendly. They compare costs directly to a grocery bill, a comparison a computer would have to be explicitly told to make. Or, similarly:

Again, the author talks to you the way, well, a human being would. Like a coworker in the hallway or a friend at the bar. Are they giving you good information? Absolutely. Anyone can use marketing and upselling tips, even if it’s just to move some out-of-date storeroom clutter on Amazon. But they’re not just handing you impersonal hard numbers.

They’re doing it with flavor. And humanity.

And that’s what robots, computers, and software can’t quite do yet. A particularly popular example going around right now is the work of Botnik Studios, whose writers used a predictive-text keyboard trained on the Harry Potter books to assemble an entirely new chapter.

While the work was ambitious and definitely entertaining, I don’t know that I’d ever call it a best-seller. Or coherent.

That’s one of the real flaws in computer-generated writing. It can generate the words, the statistics, the analysis, but what it can’t do is come up with ideas. All it can do is spit out what it’s been told to create.

It’s one of the reasons creative jobs are, compared to the rest of the job market, among the safest around.

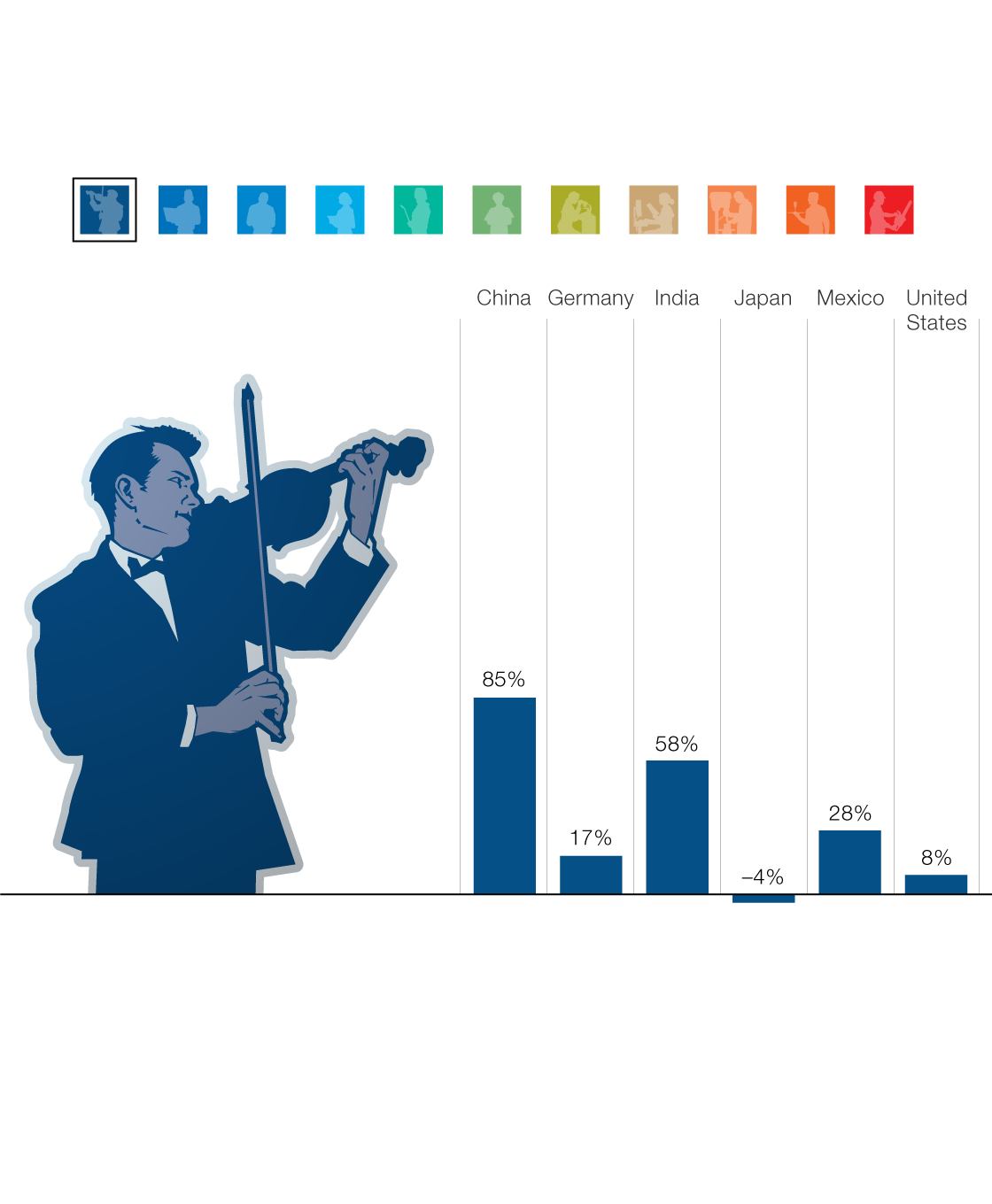


The demand for “creative” jobs is expected to increase 8% in America by 2030. Not a massive jump, admittedly, but when compared to office support (falling by 20%) or physical labor (falling by a full 31%), it’s definitely safer than many careers.

So far we cannot, and may never be able to, fully automate the creation of ideas. Creativity is a human output: it requires a mind that can reason and innovate, not just compute and generate.

Ultimately, computer-generated content creation is a wonderful tool. It can speed up the generation of all kinds of documents and reports, from financial reporting to inter-office emails to sports reporting. But, like most tools, it is only as useful as the people who are wielding it.

The best approach? Use your AI and what it generates. But make sure you keep at least one human around to monitor it, read it through, and edit it with a human eye. Make it human. Give it voice and life. The result will be better for the combination.

Conclusion

The increased automation of modern society isn’t going anywhere. With potentially 800 million jobs worldwide facing automation by 2030, the technology is only going to expand. But while more jobs than ever before are going to be taken over by automation, creative jobs in the US might actually see an increase.

Why? Well, computers just aren’t good at generating ideas. They’re not good at coming up with unique solutions. And they sorely lack a personality.

If you’re looking for a quick way to get an earnings report generated, yes, you might want to take a look at having a computer do that job for you. While it will be dry and to the point, sometimes, that’s exactly what you want in your financials.

But if you want to tell a story? If you want to come up with fresh new ideas? If you want to convince, to inspire, or to re-energize? You’re going to want a human behind the wheel. Or at least placing the final touches.

We’re entering an age of machines. The computers are here, and they’re taking over – bit by bit, every day.

That doesn’t mean human workers, or human writers, are going anywhere, though.

After all, someone had to write this article, didn’t they?